Blue Team Focus: This guide is aimed at defenders who want a practical way to collect network telemetry, centralize logs, hunt threats, and investigate incidents in one platform.

Security Onion is a Linux distribution built for network security monitoring, log management, and threat hunting. The most accurate way to understand it is as a pre-integrated defensive stack rather than a single product. It combines packet capture, network metadata generation, signature-based detection, indexing, hunt workflows, and case management so that analysts can move from raw evidence to validated conclusions with less operational friction.

Teams often have enough tools but still struggle to get consistent outcomes because each tool stores and presents data differently. Security Onion reduces that integration burden by establishing a common operational pipeline. Traffic is collected, transformed into useful telemetry, checked for known malicious behavior, indexed for search, and made available inside analyst workflows that support triage and investigation.

What Security Onion Adds Beyond Basic Monitoring

Security Onion is not only about seeing packets. It is about turning packet-level visibility into usable investigation context. Full packet capture preserves ground truth when you need to reconstruct events at the protocol level. Metadata from Zeek and Suricata provides structure that makes broad hunting possible without manually reading every packet. Signature-based detection from Suricata provides fast alerting for known threats. File extraction and analysis extend visibility into transferred content and payload behavior. Case workflows keep related evidence together so that investigations remain coherent over time.

This integrated model is important because defenders do not investigate threats in one linear view. They pivot between alert artifacts, host signals, network metadata, and packet evidence. When those pivots happen inside one platform, the time from detection to validated understanding is shorter, and the quality of analysis is usually higher.

At a conceptual level, Security Onion combines three defensive disciplines that are often separated in practice. Network Security Monitoring (NSM) gives visibility into communications and protocol behavior. Security Information and Event Management (SIEM)-style indexing makes large telemetry sets searchable at operational speed. Threat hunting provides the analytical method for testing hypotheses against collected evidence. When these three parts share one data plane, analysts can move from raw events to validated findings with fewer translation gaps.

The Monitoring Model and Data Plane

In practical deployments, Security Onion receives network traffic from a SPAN (port mirror) or network tap attached to a dedicated monitoring interface, and it can optionally ingest additional host or log sources. Sensors such as Zeek and Suricata process that traffic, producing both summarized protocol activity and alert output. Processing services then normalize and route this telemetry into indexed storage so that analysts can query, correlate, and investigate.
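To make the normalization step concrete, here is a minimal sketch that renames a few fields from a Zeek `conn.log` JSON record into ECS-style names such as `source.ip` and `destination.port`. The mapping table and the `normalize_conn` helper are illustrative assumptions, not Security Onion's actual ingest pipeline, which handles far more fields and log types.

```python
import json
from typing import Any

# Illustrative mapping from Zeek conn.log JSON keys to ECS-style field names.
# The "zeek.uid" target is a hypothetical choice for this sketch.
ZEEK_CONN_TO_ECS = {
    "id.orig_h": "source.ip",
    "id.orig_p": "source.port",
    "id.resp_h": "destination.ip",
    "id.resp_p": "destination.port",
    "proto": "network.transport",
    "uid": "zeek.uid",
}


def normalize_conn(raw_line: str) -> dict[str, Any]:
    """Parse one Zeek conn.log JSON line and rename known keys to ECS-style names."""
    record = json.loads(raw_line)
    normalized: dict[str, Any] = {"event.dataset": "zeek.conn"}
    for zeek_key, ecs_key in ZEEK_CONN_TO_ECS.items():
        if zeek_key in record:
            normalized[ecs_key] = record[zeek_key]
    # Copy Zeek's "ts" unchanged; its format depends on how Zeek's JSON writer is configured.
    normalized["@timestamp"] = record.get("ts")
    return normalized


sample = (
    '{"ts": 1700000000.5, "uid": "CAbc123", "id.orig_h": "10.0.0.5", "id.orig_p": 51515,'
    ' "id.resp_h": "203.0.113.7", "id.resp_p": 443, "proto": "tcp"}'
)
print(normalize_conn(sample)["destination.ip"])
```

Renaming into a shared schema is what lets a single query span Zeek, Suricata, and host records without per-tool field knowledge.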

The useful distinction is that Security Onion preserves both detail and abstraction at once. If an alert raises suspicion, metadata gives immediate context, and pcap remains available for deep forensic review. This balance lets defenders investigate quickly without giving up fidelity when deeper validation is required.

There is an important security concept behind this design. Signature detection and behavioral analysis are complementary, not competing, controls. Signature engines identify known malicious patterns quickly, which helps reduce response time for established threats. Behavioral metadata supports detection of novel or modified activity that no signature currently covers. Full packet retention acts as evidence assurance, allowing teams to revalidate assumptions when new intelligence arrives or when an investigation is challenged.

Analyst Workflow and Operational Value

The analyst experience is where Security Onion becomes more than a telemetry collector. Alert views provide a starting point for triage. Hunt interfaces support hypothesis-driven queries over indexed data. Case handling allows evidence grouping, annotation, and investigation continuity. Packet access provides a final source of truth when protocol-level validation is needed. System and node visibility support operational reliability so analysts can trust that collection and processing are healthy.

This workflow consistency is often more valuable than any individual detection rule. Many platforms can generate alerts, but fewer platforms support a smooth transition from alert to context, from context to evidence, and from evidence to defensible reporting inside one environment.

There is also an analytical discipline embedded in this workflow. Triage is not only about deciding whether an alert is true or false. It is about assigning investigative value under uncertainty. A low-confidence alert with high adversary relevance can be more important than a high-confidence alert tied to commodity scanning noise. Security Onion helps with that prioritization because it keeps context close to the alert. Metadata, packet evidence, and related detections can be reviewed quickly enough that analysts can make better judgment calls before queue pressure pushes them to close cases too early.
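One way to express that prioritization is a blended score in which analyst-assessed adversary relevance outweighs raw detection confidence. The `Alert` fields, the 0.7 weight, and the linear blend below are hypothetical choices for illustration, not a Security Onion feature.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    name: str
    confidence: float  # 0.0-1.0: how likely the alert reflects a true detection
    relevance: float   # 0.0-1.0: analyst-assigned adversary relevance (hypothetical field)


def investigative_value(alert: Alert, relevance_weight: float = 0.7) -> float:
    """Linearly blend relevance and confidence, weighting relevance more heavily."""
    return relevance_weight * alert.relevance + (1 - relevance_weight) * alert.confidence


queue = [
    Alert("commodity scanner signature", confidence=0.95, relevance=0.1),
    Alert("rare outbound TLS to new domain", confidence=0.4, relevance=0.9),
]

# Highest investigative value first.
for a in sorted(queue, key=investigative_value, reverse=True):
    print(f"{investigative_value(a):.2f}  {a.name}")
```

With these inputs the low-confidence but highly relevant alert ranks first, which is the behavior the paragraph argues for; in practice the weight would be tuned to the local threat model.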

Detection Engineering in Security Onion

Detection engineering in this platform should be treated as an iterative feedback loop rather than a one-time rule import exercise. Suricata rules provide immediate coverage for known patterns, but rule fidelity depends heavily on local environment behavior, protocol mix, and traffic baseline. Zeek metadata provides the substrate for more behavior-driven detections, especially when signatures are evaded through infrastructure churn, payload mutation, or protocol abuse that remains syntactically valid.

A mature approach combines curated signatures with telemetry-derived hypotheses. Analysts first identify recurring suspicious patterns in metadata, then validate them against packet evidence, and finally codify detection logic through rules, queries, or dashboards. Over time, this process shifts defensive posture away from pure indicator chasing and toward adversary behavior tracking. The practical advantage is durability. Infrastructure indicators rotate quickly, but communication patterns, protocol misuse, and execution tradecraft tend to persist longer.

False positives should be handled as engineering debt, not as an analyst inconvenience. Every noisy detection consumes response capacity and erodes trust in the alerting layer. Security Onion supports tuning through query validation and contextual pivots, which makes it possible to refine detections against real environment behavior before promoting them to high-priority alerts.
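Treating noise as engineering debt implies measuring it. The sketch below groups closed alerts by rule, flags rules whose benign share exceeds a threshold, and reports the source contributing the most benign hits, which points toward a narrowly scoped suppression rather than disabling the rule outright. The record layout, thresholds, and `tuning_candidates` helper are hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical closed-alert records: (rule_id, source_ip, disposition).
closed_alerts = [
    ("2001", "10.0.0.8", "benign"),
    ("2001", "10.0.0.8", "benign"),
    ("2001", "10.0.0.8", "benign"),
    ("2001", "10.0.0.9", "true_positive"),
    ("3005", "10.0.0.12", "true_positive"),
    ("3005", "10.0.0.13", "benign"),
]


def tuning_candidates(records, max_benign_ratio=0.5, min_volume=3):
    """Return {rule_id: (benign_ratio, noisiest_benign_source)} for rules needing tuning."""
    by_rule = defaultdict(list)
    for rule_id, src, disposition in records:
        by_rule[rule_id].append((src, disposition))

    candidates = {}
    for rule_id, rows in by_rule.items():
        benign_sources = [src for src, d in rows if d == "benign"]
        ratio = len(benign_sources) / len(rows)
        # Skip low-volume rules: a couple of closures is not enough evidence to tune on.
        if len(rows) >= min_volume and ratio > max_benign_ratio:
            top_src, _ = Counter(benign_sources).most_common(1)[0]
            candidates[rule_id] = (round(ratio, 2), top_src)
    return candidates


print(tuning_candidates(closed_alerts))
```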

Threat Hunting Methodology on the Platform

Threat hunting in Security Onion is most effective when driven by explicit hypotheses that can be disproven by telemetry. A strong hypothesis ties adversary intent to observable behavior. For example, a hypothesis about command-and-control activity might be translated into expectations around beacon cadence, unusual DNS resolution patterns, JA3 or JARM clustering, and protocol anomalies at service boundaries.
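Beacon cadence, one of the expectations named above, can be tested with simple interval statistics: regular callbacks produce inter-connection gaps with a low coefficient of variation (standard deviation divided by mean). This is a minimal sketch assuming connection start times for a single source and destination pair have already been extracted; it ignores DNS, JA3/JARM, and protocol signals, and beacons with deliberate jitter will score less cleanly than this toy example suggests.

```python
from statistics import mean, pstdev


def beacon_score(timestamps: list[float]) -> tuple[float, float]:
    """Return (mean interval, coefficient of variation) for a set of connection times.

    A low coefficient of variation means very regular spacing, one beacon-like trait.
    """
    if len(timestamps) < 3:
        raise ValueError("need at least three connections to measure cadence")
    ts = sorted(timestamps)
    intervals = [b - a for a, b in zip(ts, ts[1:])]
    avg = mean(intervals)
    cv = pstdev(intervals) / avg if avg > 0 else float("inf")
    return avg, cv


# Hypothetical connection start times (epoch seconds) for one source/destination pair.
regular = [0, 60, 121, 180, 241, 300]
bursty = [0, 5, 7, 300, 302, 900]
print(beacon_score(regular))
print(beacon_score(bursty))
```

A hunter would treat a low score as a lead to validate against packet evidence, not as a verdict.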

The platform supports this method because hunters can traverse multiple evidence layers without changing systems. A query can begin in indexed metadata, pivot to related alerts, then validate uncertainty through packet review. If supporting host telemetry exists, the same investigation can expand into endpoint context to test whether network behavior aligns with process activity, persistence artifacts, or credential access patterns.

This cross-layer validation is a core security concept. High-confidence detection is usually not produced by one log line. It is produced by coherent evidence chains across independent telemetry sources. Security Onion makes that chain-building practical at operational speed.
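An evidence chain across independent sources often starts with a join on shared attributes. The sketch below pairs network connections with endpoint records of a process opening the same connection, matching on addresses, destination port, and a time tolerance. Both record types and the 2-second tolerance are hypothetical; real correlation must also account for NAT, proxies, and clock skew between sensors and hosts.

```python
from dataclasses import dataclass


@dataclass
class NetConn:          # e.g. derived from Zeek connection metadata
    ts: float
    src_ip: str
    dst_ip: str
    dst_port: int


@dataclass
class ProcNetEvent:     # hypothetical endpoint record: a process opened a connection
    ts: float
    host_ip: str
    dst_ip: str
    dst_port: int
    process: str


def link_evidence(conns, proc_events, tolerance_s=2.0):
    """Pair each network connection with endpoint events matching on addresses and time."""
    chains = []
    for c in conns:
        for p in proc_events:
            same_flow = (c.src_ip, c.dst_ip, c.dst_port) == (p.host_ip, p.dst_ip, p.dst_port)
            if same_flow and abs(c.ts - p.ts) <= tolerance_s:
                chains.append((c, p.process))
    return chains


conns = [NetConn(1000.0, "10.0.0.5", "203.0.113.7", 443)]
procs = [
    ProcNetEvent(1000.8, "10.0.0.5", "203.0.113.7", 443, "powershell.exe"),
    ProcNetEvent(1500.0, "10.0.0.5", "203.0.113.7", 443, "chrome.exe"),
]
for conn, process in link_evidence(conns, procs):
    print(conn.dst_ip, conn.dst_port, "<-", process)
```

The tolerance window is only meaningful if sensor and host clocks agree, which is one reason time synchronization matters for investigative confidence.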

Common Failure Modes and How to Avoid Them

Most failed deployments do not fail because the software stack is incapable. They fail because architecture, operations, and detection strategy are mismatched. A common issue is overloading standalone deployments with production traffic. When ingestion, indexing, and query workloads compete on one host, analysts experience degraded search latency exactly when investigation demand is highest.

Another common issue is assuming default rules are sufficient. Default coverage is a starting point, not an outcome. Without environment-specific tuning, teams either drown in noisy alerts or suppress too aggressively and miss meaningful activity. A third issue is weak data hygiene. Inconsistent time synchronization, incomplete interface visibility, or missing host telemetry produces blind spots that undermine confidence in conclusions.

Security Onion can mitigate these risks, but only if operational controls are treated as first-class requirements. Capacity planning, time sync integrity, retention policy design, and role-based access control are security controls in this context, not administrative afterthoughts.

From Lab Confidence to Production Readiness

A lab proves functional capability, but production requires reliability under stress. The transition should be treated as a staged engineering program. Teams first validate data flow correctness, then measure indexing and query performance under realistic burst conditions, and finally test investigation continuity through tabletop and live-fire scenarios.

Production readiness should also include failure testing. Teams need to understand what happens when a forward node goes offline, when queue depth spikes, or when a search node becomes unavailable. The goal is not perfect uptime. The goal is graceful degradation with preserved investigative integrity.

This is where distributed architecture earns its complexity cost. By separating collection, processing, and search roles, teams gain the ability to scale and recover by function. That function-level resilience is one of the most important differences between a useful lab and a dependable security operations platform.

Measuring Security Onion Effectiveness

The most meaningful question is not whether the dashboard looks active. The meaningful question is whether defensive decisions are improving. Useful measurement focuses on detection precision, triage latency, investigation completion quality, and post-incident learning velocity. If alerts increase but investigation quality drops, monitoring maturity is not improving.

A practical metric model ties platform output to operational outcomes. Detection quality should be evaluated by true positive yield and suppression hygiene. Hunt value should be evaluated by hypothesis quality and finding durability. Incident support value should be evaluated by how quickly analysts can assemble defensible evidence chains.
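A minimal sketch of two of those metrics, computed from hypothetical case records: true positive yield (the share of triaged alerts confirmed malicious) and median time from alert creation to triage start. The record layout is an assumption; in practice these timestamps would come from whatever alert and case workflow data your deployment retains.

```python
from statistics import median

# Hypothetical case records: (alert_created_ts, triage_started_ts, disposition), in seconds.
cases = [
    (0, 300, "true_positive"),
    (100, 1900, "benign"),
    (200, 500, "true_positive"),
    (400, 4000, "benign"),
    (500, 800, "benign"),
]


def platform_metrics(records):
    """Compute true positive yield and median time-to-triage (seconds)."""
    dispositions = [d for _, _, d in records]
    tp_yield = dispositions.count("true_positive") / len(records)
    latencies = [started - created for created, started, _ in records]
    return {"true_positive_yield": tp_yield, "median_triage_s": median(latencies)}


print(platform_metrics(cases))
```

The median is used instead of the mean so that a few long-running triage delays do not mask typical analyst responsiveness.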

When Security Onion is measured this way, it becomes clear that the platform is not only a telemetry repository. It is an intelligence and response accelerator. That framing keeps teams focused on security outcomes rather than tooling activity.

Architecture Selection for Real Environments

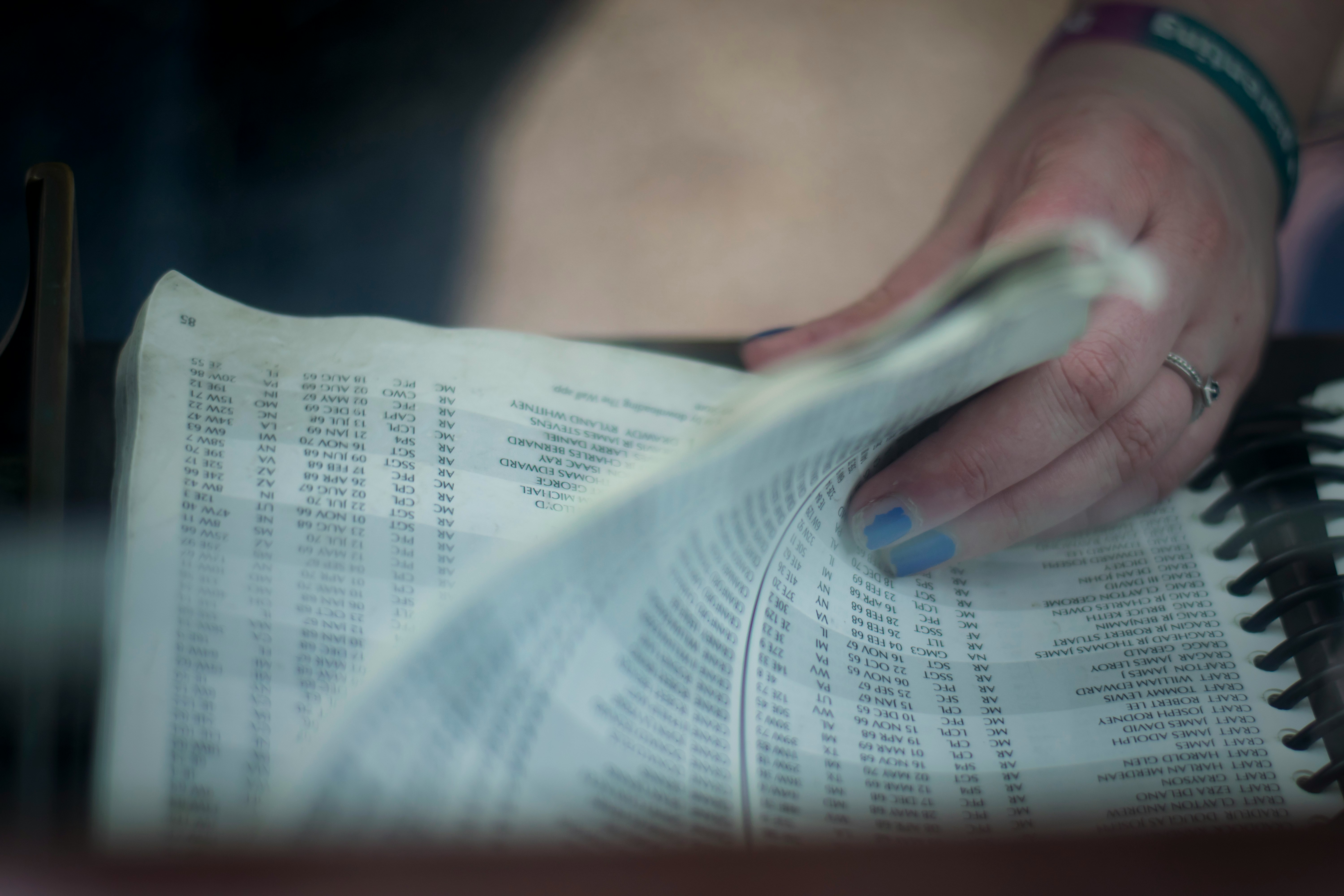

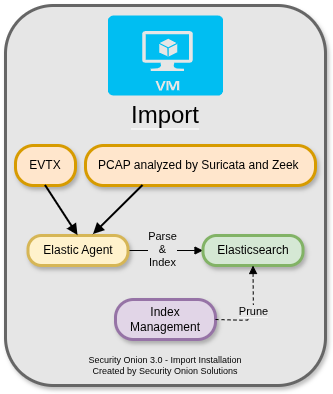

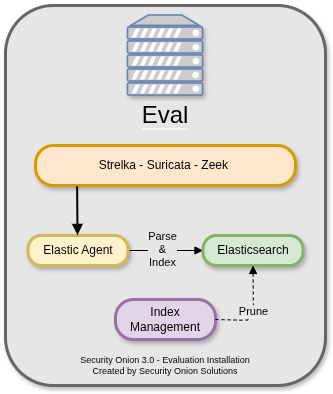

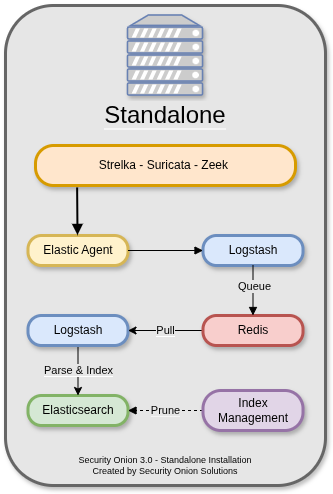

Security Onion supports import, evaluation, standalone, and distributed architectures. Import mode is useful for offline packet analysis and controlled training datasets. Evaluation mode adds live sniffing for validation in temporary environments. Standalone mode keeps deployment simple by consolidating services on one host, which is useful for labs and small proofs of concept. Distributed mode separates collection, management, and search functions across multiple nodes, which is the model generally favored for production because it scales more predictably.

The core architecture decision depends on traffic volume, retention goals, search latency requirements, and operational maturity. A small lab can learn effectively with standalone. A production deployment generally benefits from distributed node roles, where forward nodes collect and preprocess telemetry, management services coordinate and store operational state, and search nodes handle indexing and query performance.
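Retention goals translate directly into storage arithmetic, which is often what pushes a deployment from standalone toward distributed roles. The helper below estimates how many days of full packet capture fit on a disk at a sustained average rate. Decimal units and an 80% usable fraction are illustrative assumptions; the estimate ignores compression, filesystem overhead, and the separate storage needed for indexed metadata.

```python
def pcap_retention_days(disk_tb: float, avg_mbps: float, usable_fraction: float = 0.8) -> float:
    """Estimate days of full packet capture a disk can hold at a sustained average rate.

    Assumes decimal units (1 TB = 10**12 bytes, 1 Mbps = 10**6 bits/s) and that only
    `usable_fraction` of the disk is available to pcap; both are illustrative assumptions.
    """
    bytes_per_day = avg_mbps * 1e6 / 8 * 86_400
    usable_bytes = disk_tb * 1e12 * usable_fraction
    return usable_bytes / bytes_per_day


# 200 Mbps sustained is about 2.16 TB of pcap per day.
print(round(pcap_retention_days(disk_tb=10, avg_mbps=200), 1))
```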

Import Architecture

Import architecture is built for deterministic replay. Teams load packet captures, run Zeek and Suricata processing, and evaluate detection outcomes against known datasets. This model is highly effective for analyst training, incident postmortem exercises, and validation of new hunting queries because the traffic corpus remains stable across repeated analysis cycles.

The underlying security concept is reproducibility. A reproducible telemetry set allows teams to measure whether a detection change improved recall or only shifted noise. The tradeoff is that import mode has no live defensive coverage, so it should be treated as a validation environment and not an active protection layer.
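Reproducibility makes before/after comparisons meaningful. Given a fixed replay corpus with ground-truth labels, precision and recall for two rule versions can be compared directly. The flow IDs and labels below are hypothetical placeholders for whatever identifiers your replayed dataset provides.

```python
def precision_recall(alerted: set[str], malicious: set[str]) -> tuple[float, float]:
    """Precision and recall of a detection over a labeled, replayable corpus."""
    true_pos = len(alerted & malicious)
    precision = true_pos / len(alerted) if alerted else 0.0
    recall = true_pos / len(malicious) if malicious else 0.0
    return precision, recall


# Hypothetical flow IDs from a fixed pcap corpus; labels stay constant across runs.
malicious = {"f1", "f2", "f3", "f4"}
before = {"f1", "f2", "f7", "f8", "f9"}  # alerts from the old rule version
after = {"f1", "f2", "f3"}               # alerts from the tuned rule version

for label, alerted in (("before", before), ("after", after)):
    p, r = precision_recall(alerted, malicious)
    print(f"{label}: precision={p:.2f} recall={r:.2f}")
```

Because the corpus and labels are fixed, any difference between the two runs reflects the rule change alone.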

Evaluation Architecture

Evaluation architecture extends import workflows with live sniffing through a monitor interface. It is the fastest route to understanding real environment behavior, especially alert volume, protocol distribution, and data quality. This architecture is useful when teams need rapid confidence in sensor placement and baseline tuning before committing to production-scale topology.

The key concept here is pre-production risk reduction. Evaluation mode helps uncover blind spots, false positive pressure, and packet visibility issues while change cost is still low.

Standalone Architecture

Standalone architecture consolidates sensor processing, transformation pipelines, queueing, indexing, and analyst access on one host. It simplifies operations and is often sufficient for small environments or controlled labs. Its main limitation is coupled resource contention. Under ingest spikes, packet processing, indexing, and analyst queries compete for the same compute and storage resources.

The security concept is coupled failure domains. Because data plane and analyst plane share infrastructure, one performance failure can degrade both visibility and investigation capability simultaneously.

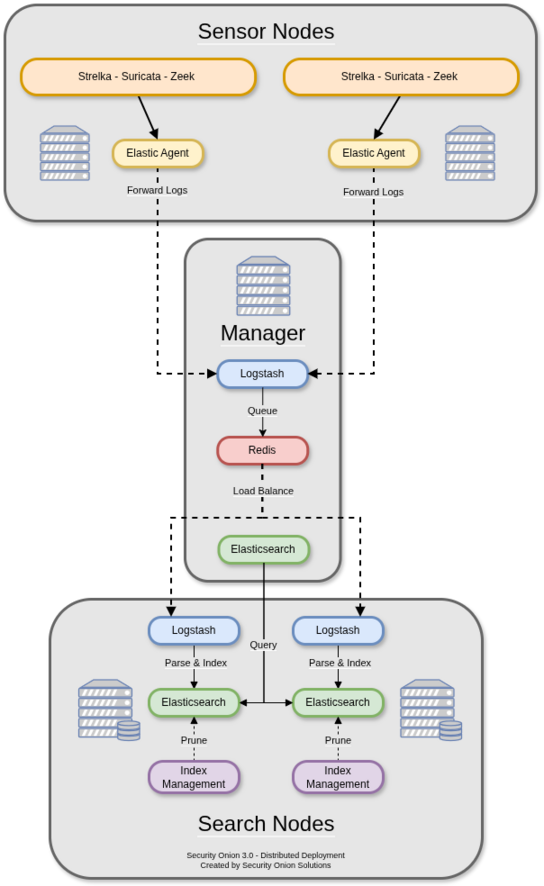

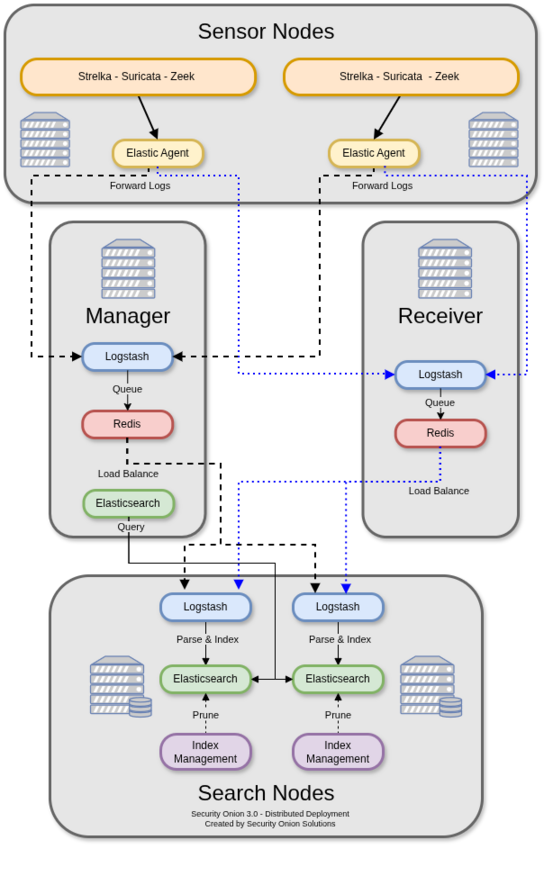

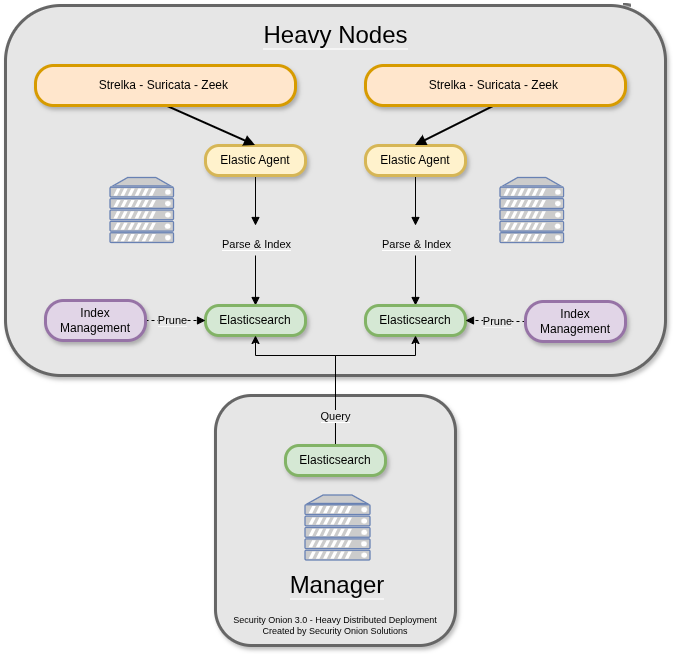

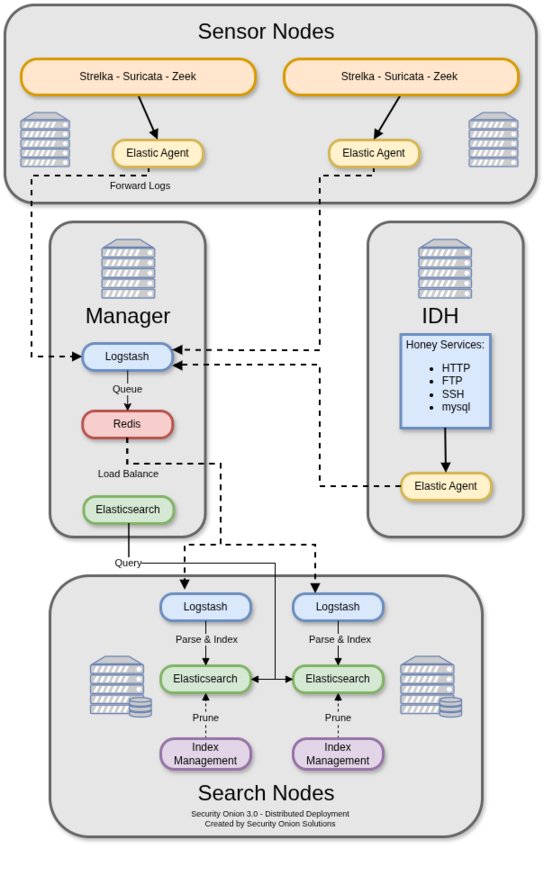

Distributed Architecture

Distributed architecture separates collection, management, and search into independent node roles. Forward nodes collect network traffic near observation points and run first-stage sensor processing locally. Management services coordinate ingestion and operational state. Search nodes scale indexing and query throughput independently. This model suits production operations, where throughput, retention, and investigative concurrency change over time.

The security concept is fault isolation with horizontal scalability. Queue-backed role separation helps preserve ingest continuity and analyst query quality during incident-driven bursts.
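A toy model shows why a queue between collection and indexing helps during bursts. Each tick, a consumer processes a fixed number of events; anything beyond that either waits in a bounded queue or is dropped. The arrival pattern, rates, and capacity are hypothetical, and this is not Security Onion's actual queueing implementation.

```python
def simulate(arrivals: list[int], drain_per_tick: int, queue_capacity: int) -> tuple[int, int]:
    """Return (events_dropped, final_backlog) for a bounded queue in front of a fixed-rate consumer."""
    backlog = 0
    dropped = 0
    for incoming in arrivals:
        pending = backlog + incoming
        processed = min(pending, drain_per_tick)
        leftover = pending - processed
        # Whatever exceeds queue capacity is lost.
        backlog = min(leftover, queue_capacity)
        dropped += leftover - backlog
    return dropped, backlog


# Hypothetical load: 100 events/tick with a 3-tick incident spike of 500 events/tick.
arrivals = [100, 100, 500, 500, 500, 100, 100, 100, 100, 100]
print(simulate(arrivals, drain_per_tick=200, queue_capacity=0))
print(simulate(arrivals, drain_per_tick=200, queue_capacity=2000))
```

With no buffer, the spike loses 900 events; with a 2,000-event queue nothing is dropped and a 400-event backlog is still draining at the end. The tradeoff is added latency instead of permanent evidence loss.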

Node Types and Their Security Roles

Management Node

The management node is the control anchor of the deployment. It coordinates central ingress, platform configuration, and core operational orchestration. The impact of its compromise is high because it can influence telemetry flow and analyst trust simultaneously. Administrative segmentation, strong authentication, and strict role-based access are mandatory controls at this layer.

Search Node

Search nodes provide indexed evidence retrieval at operational speed. They are responsible for query responsiveness, index lifecycle behavior, and hunt scalability under load. When search performance degrades, investigation quality usually degrades with it because analysts narrow scope prematurely. Capacity planning and index hygiene on these nodes are therefore defensive quality controls.

Forward Node

Forward nodes define the visibility edge of the platform. They run packet and telemetry collection nearest to monitored segments and execute first-stage sensor processing. If forward placement is incomplete or packet fidelity is poor, downstream analysis cannot recover missing evidence. In practice, forward node design is where theoretical coverage becomes real coverage.

Fleet Standalone Node

Fleet standalone nodes offload endpoint management operations from core monitoring services. This separation reduces control-plane contention during endpoint enrollment, policy updates, and host telemetry bursts. In mature deployments, this role improves stability by isolating endpoint lifecycle workload from central ingest and search paths.

Receiver Node

Receiver nodes add resilience to event intake and can preserve ingestion continuity when primary manager paths are strained. This role matters during high-volume incidents, where data loss during the first hours can permanently reduce investigative confidence.

Honeypot Node

Honeypot nodes provide controlled exposure surfaces to collect high-signal unsolicited interactions. They should be treated as enrichment sensors, not replacements for core telemetry. Their value is asymmetry: attacker interaction itself becomes context that can inform prioritization and hunt hypotheses.

References

https://docs.securityonion.net/en/3/main/architecture/

Installation and Trust Baseline

Security Onion can be installed through ISO media, package-based installation on supported Linux distributions, or cloud images in major cloud providers. The installation method should match your environment constraints, but the trust baseline should remain the same in all cases. Verify installation artifacts before deployment and treat monitoring infrastructure as high-trust security infrastructure from the start.

The monitoring plane is a high-value target in its own right because it stores sensitive telemetry, detection logic, and incident context. From a security architecture perspective, that means integrity and access control are as important as collection depth. Isolating management access, applying strict role-based permissions, and maintaining patch hygiene are not optional hardening tasks; they are prerequisites for trusting investigative outcomes.

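For ISO installs, verify the downloaded image against its detached signature before use. The check only establishes trust if the signing key itself was obtained and validated through the official documentation.

```shell
# Placeholders: substitute the signature and ISO file names from the official download
gpg --verify <sig-file> <iso-file>
```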
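Where a SHA-256 checksum is published alongside an artifact, it can be checked as an additional integrity test. A checksum only proves the file matches the value you were given, so it complements rather than replaces signature verification. This is a minimal Python sketch; the `sha256_file` and `matches_published` helper names are hypothetical.

```python
import hashlib
import hmac


def sha256_file(path: str, chunk_size: int = 1 << 20) -> str:
    """Stream a file through SHA-256 so large ISO images are not loaded into memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published(path: str, published_hex: str) -> bool:
    """Compare the file's digest to a published hex checksum (case and whitespace tolerant)."""
    return hmac.compare_digest(sha256_file(path), published_hex.strip().lower())
```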

Why Security Onion Remains Practical

Security Onion remains valuable because it shortens the distance between collection and analysis. Instead of spending effort integrating disconnected tooling, teams can focus on detection quality, hunt hypotheses, and incident understanding. The platform does not remove the need for strong analyst judgment, but it gives analysts a more coherent environment for applying that judgment.

For learners, Security Onion makes defensive architecture tangible by showing how packet capture, metadata, alerting, search, and case workflows fit together. For operators, it provides a practical path to scale monitoring with clearer separation of roles and stronger investigation continuity.

In mature teams, that continuity becomes the deciding factor. Technology alone does not produce resilient defense. Consistent evidence flow, disciplined detection engineering, and repeatable investigative method do. Security Onion is valuable because it supports all three in one operational model.